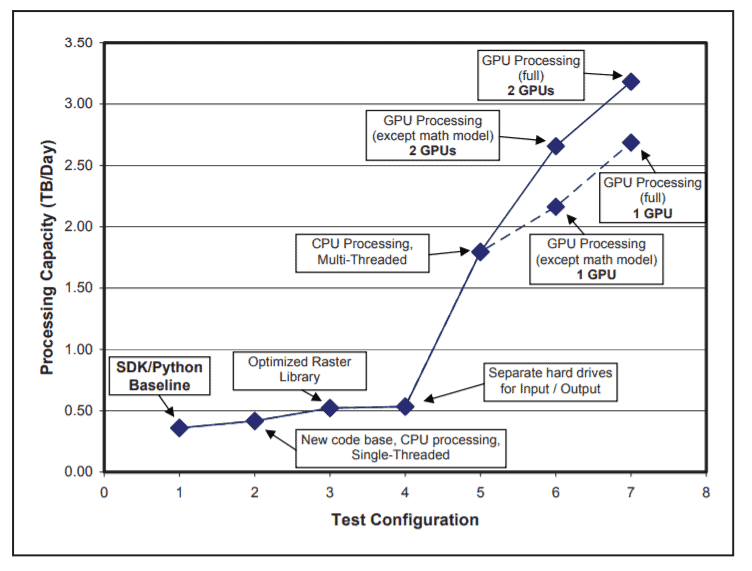

CATALYST has developed new high-speed orthorectification functions. The code takes advantage of modern multi-core processor architectures, as well as NVIDIA Graphics Processing Units (GPUs), to process standard data products from the WorldView-1, QuickBird and IKONOS satellites.

Results:

Even without GPUs, the system can process 1.75 TB per day, thanks to multithreaded processing and improved I/O. With a single GPU, processing was 6 times faster than the Catalyst ortho PPF and 1.5 times faster than a multithreaded, non-GPU implementation. This demonstration shows the potential of GPUs to accelerate other computationally intensive tasks in CATALYST's field of business, such as DEM creation, image matching and pan-sharpening.
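The throughput and speed-up figures above can be related with some simple arithmetic. This sketch assumes decimal units (1 TB = 10^6 MB) and that all three figures were measured on the same workload, which the results do not state. The derived values are therefore implied estimates, not measurements.

```python
# Figures quoted in the Results section.
TB_PER_DAY_NO_GPU = 1.75   # multithreaded + improved I/O, no GPU
GPU_VS_PPF = 6.0           # single GPU vs. Catalyst ortho PPF
GPU_VS_MULTITHREAD = 1.5   # single GPU vs. multithreaded, non-GPU version

SECONDS_PER_DAY = 24 * 60 * 60


def tb_per_day_to_mb_per_s(tb_per_day: float) -> float:
    """Convert decimal terabytes per day to decimal megabytes per second."""
    return tb_per_day * 1_000_000 / SECONDS_PER_DAY


# Sustained rate needed to process 1.75 TB in one day (about 20.25 MB/s).
no_gpu_mb_s = tb_per_day_to_mb_per_s(TB_PER_DAY_NO_GPU)

# Implied speed-up of the multithreaded version over the PPF: 6 / 1.5 = 4x.
multithread_vs_ppf = GPU_VS_PPF / GPU_VS_MULTITHREAD

# Implied single-GPU daily volume, assuming the 1.5x ratio holds on this workload.
gpu_tb_per_day = TB_PER_DAY_NO_GPU * GPU_VS_MULTITHREAD

print(f"{no_gpu_mb_s:.2f} MB/s, {multithread_vs_ppf:.1f}x, {gpu_tb_per_day:.3f} TB/day")
```

Run as-is, it prints `20.25 MB/s, 4.0x, 2.625 TB/day`. In other words, the multithreaded CPU path alone would account for roughly a 4x gain over the PPF, and the GPU contributes the remaining 1.5x.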

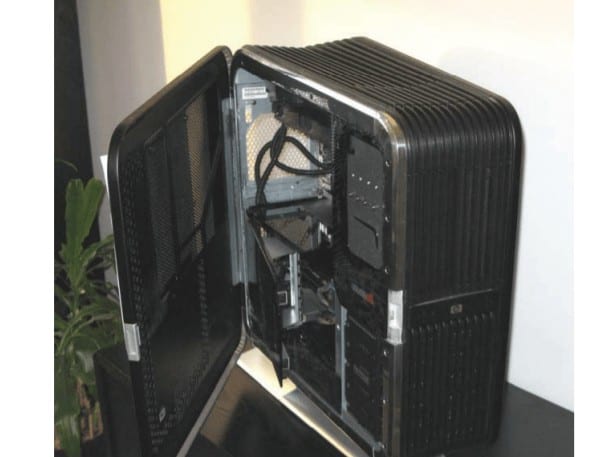

System Deployment

The CATALYST Enterprise system can be deployed in the cloud in much the same way as a non-cloud CATALYST Enterprise system. The main difference is that no physical hardware needs to be purchased to configure a CATALYST Enterprise system that achieves the stated throughput requirements. Non-cloud CATALYST Enterprise systems are typically deployed on desktop or rack-mounted machines built to a defined set of hardware specifications (CATALYST would specify which hardware to purchase), covering:
– CPU / GPU
– RAM
– Disk storage
– UPS
– File server
– Network switch
– Operating system

About Catalyst

CATALYST is a PCI Geomatics brand, introduced to put our leading-edge technology into the hands of decision makers. We’re a startup – with hundreds of algorithms, scalable solutions, and decades of experience.

As mentioned in the previous sections, the orthorectification module has a number of tunable parameters that the user can modify at runtime. These parameters include:

1. The number of GPUs used
2. The amount of memory available for use on the GPU and on the host
3. The allocation of particular processing steps to the GPU or to the host

The objective of performance tuning is to find the combination of parameters that produces the maximum data throughput. The same experiments also reveal how sensitive the system is to each parameter.
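One straightforward way to carry out this kind of tuning is an exhaustive grid search. Every combination of parameter values is benchmarked, the fastest is kept, and the change in throughput is then measured as each parameter varies on its own. The sketch assumes a `measure` callback that runs one benchmark and returns throughput. The parameter names (`num_gpus`, `gpu_mem_mb`, `resample_on`) and the `toy_measure` model are hypothetical illustrations, not CATALYST's API or measured data.

```python
import itertools
from typing import Callable, Dict, List, Tuple

Config = Dict[str, object]
Key = Tuple[object, ...]

# Hypothetical grid mirroring the three kinds of tunable parameters.
GRID: Dict[str, List[object]] = {
    "num_gpus": [1, 2],                 # number of GPUs used
    "gpu_mem_mb": [1024, 2048, 4096],   # memory budget on the GPU
    "resample_on": ["host", "gpu"],     # where one (hypothetical) step runs
}


def toy_measure(cfg: Config) -> float:
    """Hypothetical stand-in for a real benchmark; returns relative throughput.
    Not measured data: it only gives the search something to optimize."""
    gpu_gain = 1.0 + 0.5 * cfg["num_gpus"]
    mem_factor = 0.5 + 0.5 * min(cfg["gpu_mem_mb"], 4096) / 4096
    step_factor = 1.3 if cfg["resample_on"] == "gpu" else 1.0
    return gpu_gain * mem_factor * step_factor


def grid_search(grid: Dict[str, List[object]],
                measure: Callable[[Config], float]) -> Tuple[Config, float, Dict[Key, float]]:
    """Benchmark every combination; return the best config, its score, and all scores."""
    names = list(grid)
    results: Dict[Key, float] = {}
    for values in itertools.product(*(grid[n] for n in names)):
        results[values] = measure(dict(zip(names, values)))
    best_key = max(results, key=results.get)
    return dict(zip(names, best_key)), results[best_key], results


def sensitivity(grid: Dict[str, List[object]], results: Dict[Key, float],
                best: Config) -> Dict[str, float]:
    """One-at-a-time sensitivity: throughput spread when a single parameter
    varies while the others stay at their best values."""
    names = list(grid)
    spread: Dict[str, float] = {}
    for name in names:
        scores = [results[tuple(v if n == name else best[n] for n in names)]
                  for v in grid[name]]
        spread[name] = max(scores) - min(scores)
    return spread


if __name__ == "__main__":
    best, score, results = grid_search(GRID, toy_measure)
    print("best:", best, "throughput:", round(score, 3))
    print("sensitivity:", sensitivity(GRID, results, best))
```

Exhaustive search is practical here because the grid is small (12 benchmark runs). With more parameters or finer value steps, a coarse-to-fine or one-at-a-time search would reduce the number of runs, at the cost of possibly missing interactions between parameters.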

Performance Tuning